Generalist robot policies are now trained on increasingly large, heterogeneous cross-embodiment datasets. While scale clearly helps, it remains unclear what is actually being transferred when we mix data from many robots, morphologies, and viewpoints.

This work asks: what kinds of cross-embodiment data actually help a policy adapt to a new robot under a fixed budget of target demonstrations?

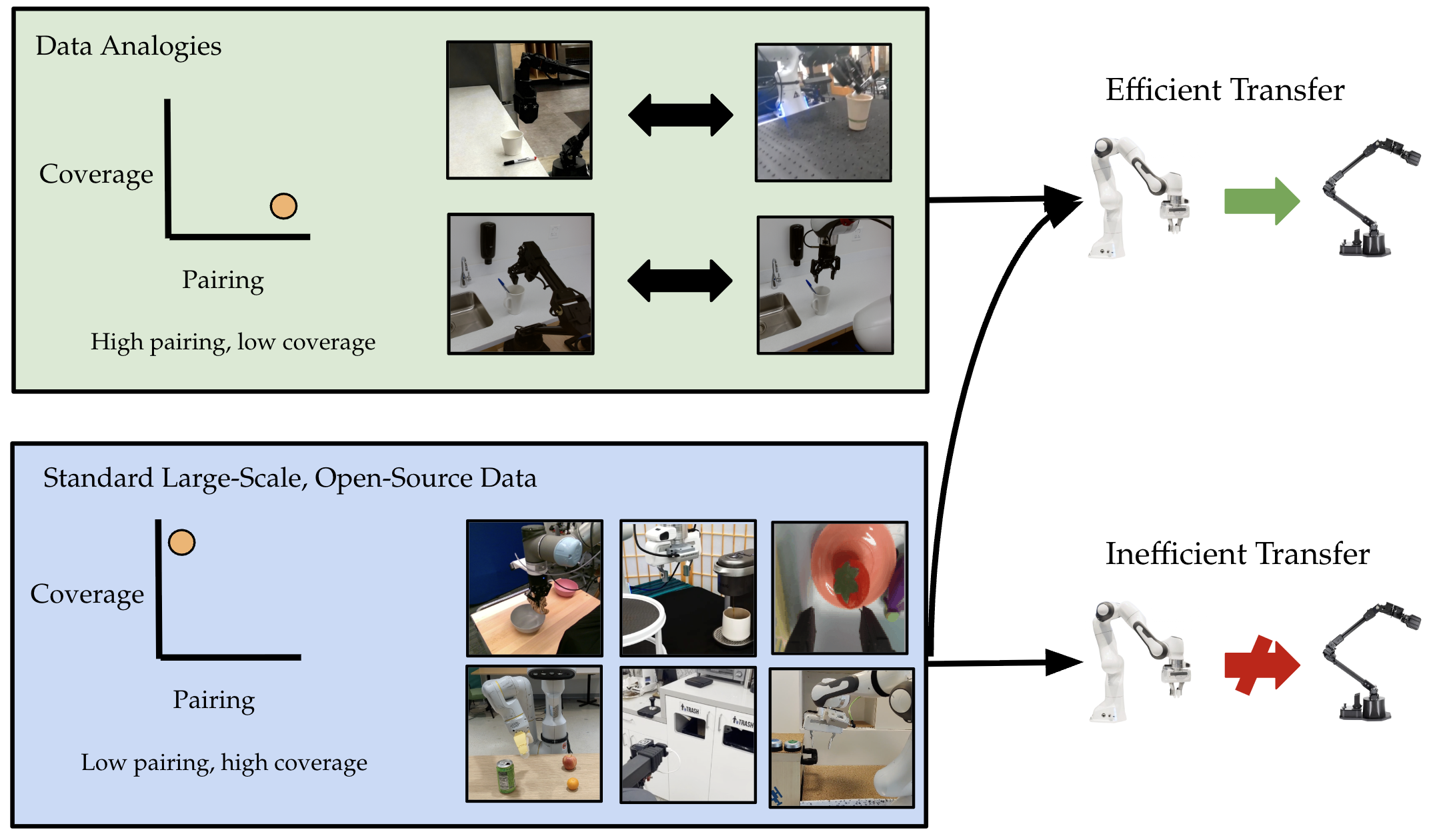

We introduce the notion of data analogies: structured correspondences that let demonstrations from one embodiment efficiently help another. We then systematically study how data collection along three axes (viewpoint, morphology, and appearance) affects this kind of transfer.

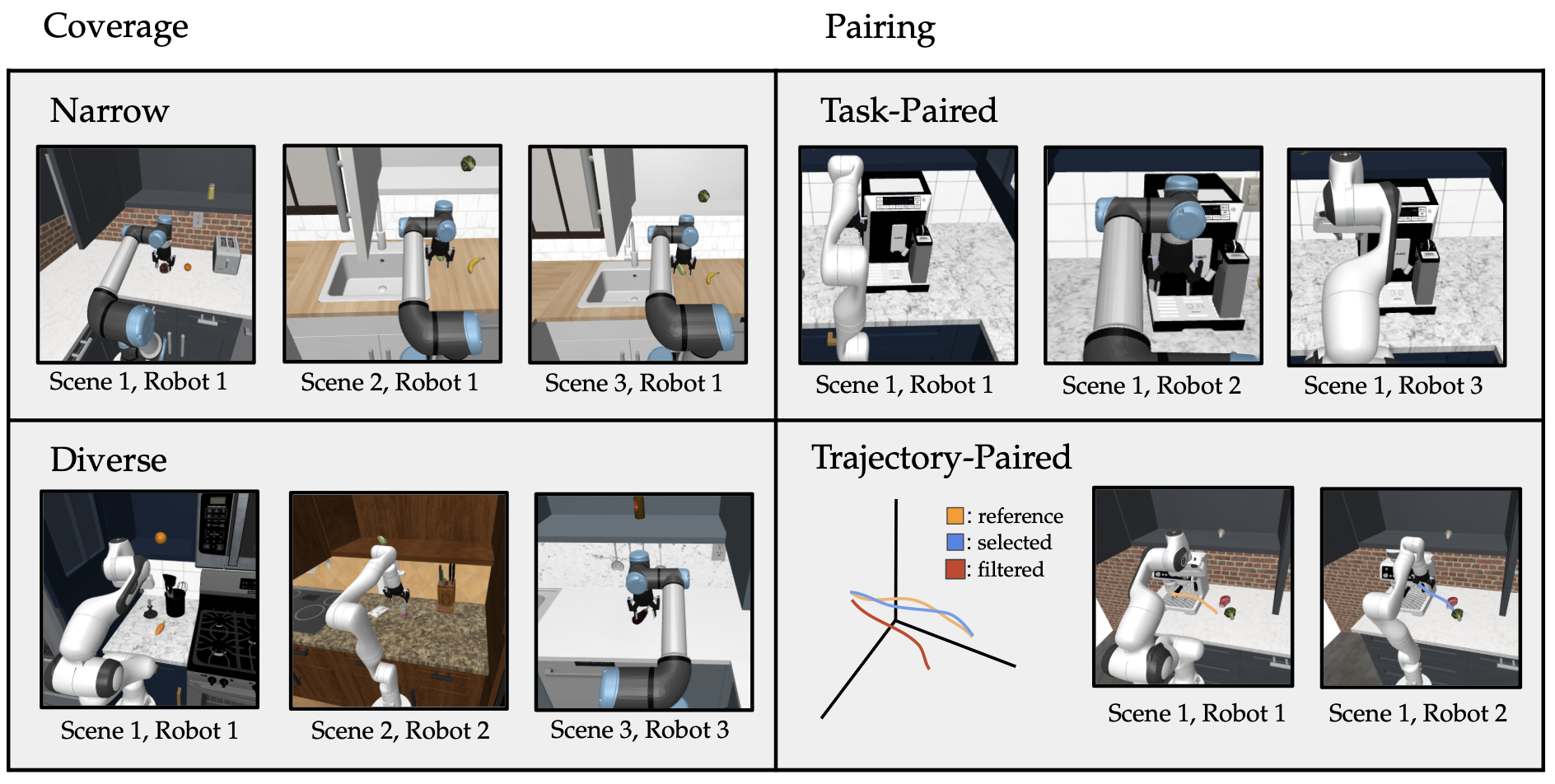

We view data for cross-embodiment learning through two orthogonal design axes: coverage and pairing.

Coverage vs. Pairing. Simulation scenes illustrating how diversity and cross-robot alignment shape transfer.
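To make the two axes concrete, here is a minimal sketch of how one might summarize a dataset along them. It assumes a hypothetical `Episode` record with viewpoint, appearance, and robot labels, and a hypothetical `pair_id` field shared by episodes that demonstrate the same trajectory on different robots. "Coverage" is counted as the number of distinct values per axis and "pairing" as the fraction of episodes with a cross-robot partner; these are illustrative proxies, not the paper's definitions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Episode:
    # Hypothetical record; field names are illustrative assumptions.
    robot: str                     # embodiment, e.g. "franka"
    viewpoint: str                 # camera placement label
    appearance: str                # scene / texture label
    task: str
    pair_id: Optional[str] = None  # shared across robots for paired demos


def coverage(episodes):
    """Count distinct values along each of the three data axes."""
    return {
        "viewpoint": len({e.viewpoint for e in episodes}),
        "morphology": len({e.robot for e in episodes}),
        "appearance": len({e.appearance for e in episodes}),
    }


def pairing_rate(episodes):
    """Fraction of episodes whose pair_id also appears on a different robot."""
    robots_per_pair = {}
    for e in episodes:
        if e.pair_id is not None:
            robots_per_pair.setdefault(e.pair_id, set()).add(e.robot)
    paired = sum(
        1
        for e in episodes
        if e.pair_id is not None and len(robots_per_pair[e.pair_id]) > 1
    )
    return paired / len(episodes) if episodes else 0.0


# Toy example: one cross-robot pair plus two unpaired episodes.
eps = [
    Episode("franka", "front", "kitchen_a", "open_drawer", pair_id="p0"),
    Episode("widowx", "front", "kitchen_a", "open_drawer", pair_id="p0"),
    Episode("franka", "side", "kitchen_b", "pick_cup"),
    Episode("piperx", "top", "kitchen_c", "pick_cup"),
]
print(coverage(eps))      # {'viewpoint': 3, 'morphology': 3, 'appearance': 3}
print(pairing_rate(eps))  # 0.5
```

The two quantities vary independently: adding more unpaired robots raises coverage while lowering the pairing rate, which is why the page treats them as orthogonal design choices.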

To study efficient cross-embodiment learning, we evaluate Pi0.5-style VLA policies on RoboCasa-based simulation benchmarks and on tabletop tasks with Franka, WidowX, PiperX, and related platforms, under a strict few-shot budget of target-robot demonstrations.

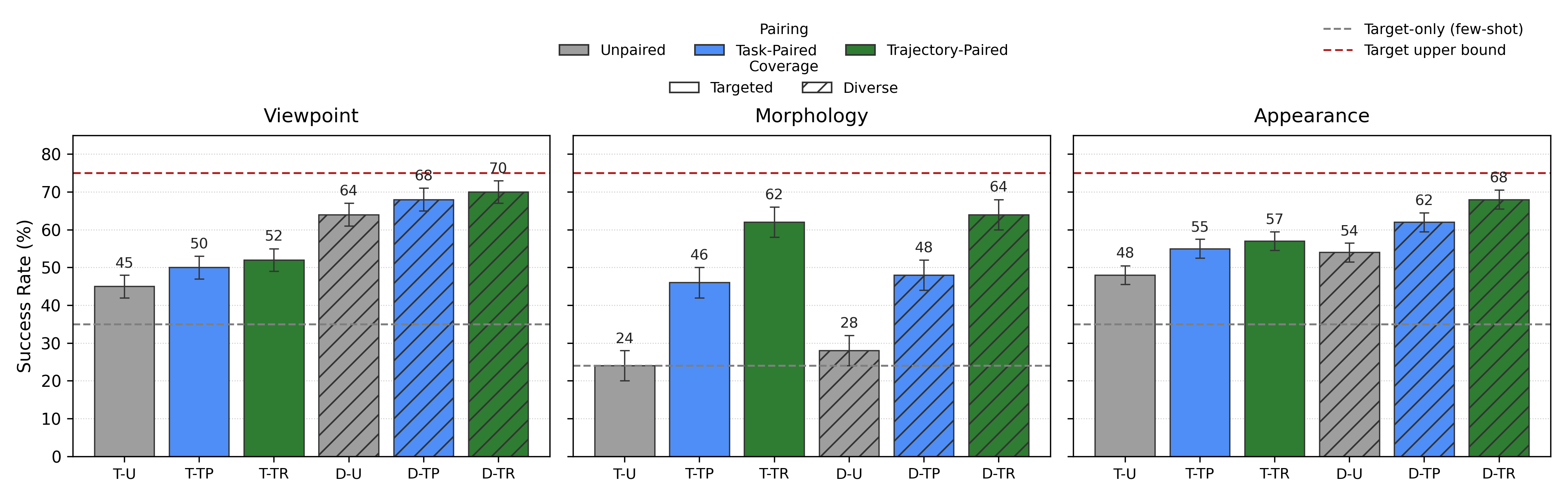

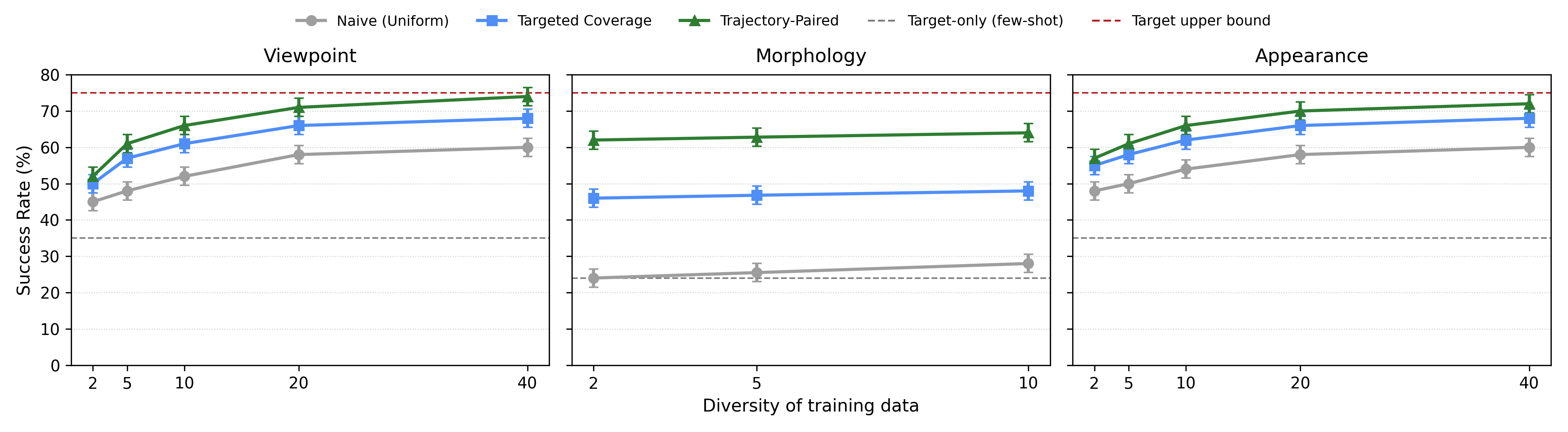

Diversity helps most for perceptual shifts (viewpoint and appearance), where broad variation regularizes the encoder and reduces overfitting to specific scenes or cameras. For morphology, however, diversity alone quickly saturates: targeted coverage and strong trajectory pairing are essential to bridge action-space differences.
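This page does not say how trajectory pairs are built. As a hedged illustration only, one standard way to put a source-robot and a target-robot demonstration of the same task into step-by-step correspondence is dynamic time warping (DTW) over end-effector positions. DTW is a swapped-in technique here, not necessarily the paper's procedure, and `dtw_align` is a hypothetical helper.

```python
import numpy as np


def dtw_align(src, tgt):
    """Align two trajectories of shape (T, D) with dynamic time warping.

    Returns the total alignment cost and the list of (i, j) index pairs
    matching src[i] to tgt[j] along the optimal warping path.
    """
    src = np.asarray(src, dtype=float)
    tgt = np.asarray(tgt, dtype=float)
    n, m = len(src), len(tgt)
    # Euclidean distance between every source step and every target step.
    dist = np.linalg.norm(src[:, None, :] - tgt[None, :, :], axis=-1)
    # cost[i, j] = minimum cumulative cost of aligning src[:i] with tgt[:j].
    cost = np.full((n + 1, m + 1), np.inf)
    cost[0, 0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost[i, j] = dist[i - 1, j - 1] + min(
                cost[i - 1, j], cost[i, j - 1], cost[i - 1, j - 1]
            )
    # Backtrack from the final cell to recover the warping path.
    path = []
    i, j = n, m
    while i > 0 and j > 0:
        path.append((i - 1, j - 1))
        step = np.argmin([cost[i - 1, j - 1], cost[i - 1, j], cost[i, j - 1]])
        if step == 0:
            i, j = i - 1, j - 1
        elif step == 1:
            i -= 1
        else:
            j -= 1
    path.reverse()
    return float(cost[n, m]), path


# A coarse source path and a finer target path along the same line.
src = [[0.0, 0.0], [1.0, 0.0], [2.0, 0.0]]
tgt = [[0.0, 0.0], [0.5, 0.0], [1.0, 0.0], [1.5, 0.0], [2.0, 0.0]]
total, path = dtw_align(src, tgt)
print(total)  # 1.0
print(path)
```

The resulting index pairs give per-timestep correspondences between two robots executing the same motion at different speeds, the kind of alignment that unpaired diversity alone does not provide for morphology shifts.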

Pairing is Important.

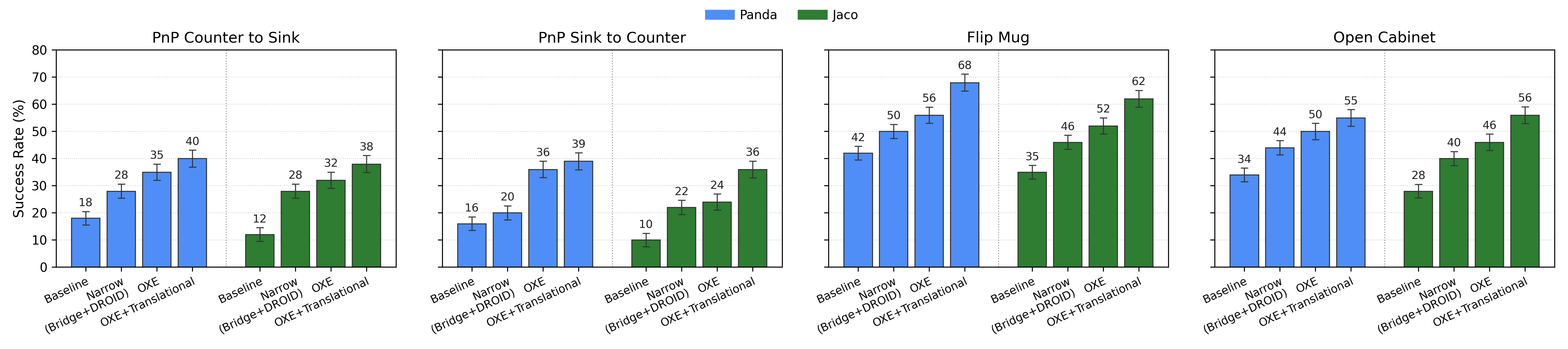

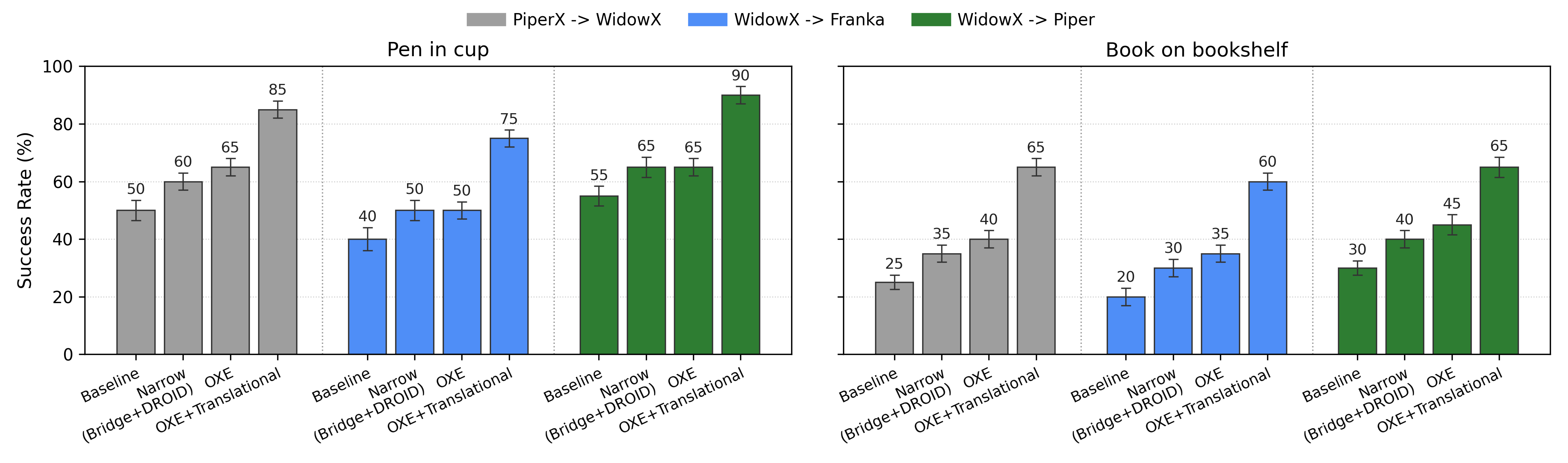

Training on large unpaired datasets such as Open X-Embodiment (OXE) provides strong baselines, but our method delivers large gains over them in both simulation and real-world evaluations.

Figure 2. Composed OXE+Translational data outperforms both narrow two-robot pools and large unpaired OXE training.
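A composed training set like the one in Figure 2 can be drawn with a simple weighted mixture sampler. The sketch below assumes hypothetical pool names (`oxe`, `translational`, `target`), placeholder episode ids, and made-up mixture weights; the paper's actual ratios are not stated on this page. The target pool is kept at a fixed small size to mimic the few-shot budget, so upweighting it means revisiting the same demonstrations rather than adding new ones.

```python
import random
from collections import Counter


def make_mixture_sampler(pools, weights, seed=0):
    """Return a function that draws (pool_name, episode) pairs.

    pools:   dict mapping a pool name to a list of episodes.
    weights: dict mapping pool names to non-negative sampling weights.
             A pool is picked in proportion to its weight, then an
             episode is drawn uniformly from that pool.
    """
    names = [n for n in pools if weights.get(n, 0) > 0 and pools[n]]
    probs = [weights[n] for n in names]
    rng = random.Random(seed)

    def sample():
        name = rng.choices(names, weights=probs, k=1)[0]
        return name, rng.choice(pools[name])

    return sample


# Hypothetical pools and weights, for illustration only.
pools = {
    "oxe": [f"oxe_{i}" for i in range(1000)],            # large unpaired pool
    "translational": [f"pair_{i}" for i in range(200)],  # paired cross-robot data
    "target": [f"tgt_{i}" for i in range(10)],           # fixed few-shot budget
}
weights = {"oxe": 0.5, "translational": 0.3, "target": 0.2}

sample = make_mixture_sampler(pools, weights, seed=0)
draws = [sample() for _ in range(10_000)]
counts = Counter(name for name, _ in draws)
print(counts)
```

Sampling by pool weight rather than by raw episode count keeps the small paired and target pools from being drowned out by the much larger unpaired pool.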

Figure 3. Scaling behavior as we increase the diversity of source embodiments, viewpoints, and scenes.

Example policy rollouts across different embodiments and viewpoints.

We validate our findings on real robot platforms, including Franka, WidowX, and PiperX, in kitchen-like scenes. Structured coverage and trajectory pairing reliably improve success rates by 25 to 40 percentage points over baselines.

Simulation robots. Diverse simulated embodiments and viewpoints.

Real robots. Physical setups with Franka, WidowX, PiperX.

Figure 4. Real-world transfer results across multiple robot pairs and tasks.

This website summarizes the main ideas, methods, and results from our paper, Data Analogies Enable Efficient Cross-Embodiment Transfer. Please refer to the full paper PDF for complete details, ablations, and experimental tables.

@misc{yang2026dataanalogiesenableefficient,
  title={Data Analogies Enable Efficient Cross-Embodiment Transfer},
  author={Jonathan Yang and Chelsea Finn and Dorsa Sadigh},
  year={2026},
  eprint={2603.06450},
  archivePrefix={arXiv},
  primaryClass={cs.RO},
  url={https://arxiv.org/abs/2603.06450},
}